Nonlinear equations Flashcards

What is the big question about nonlinear equations?

How do we find the roots of the equation?

When there is no explicit formula to find the root(s) what types of methods do we have to use?

Iterative methods

What is the first step when trying to find any root?

Plot the graph so you can check your answer

What is the notation for any root?

x*

What is the Intermediate Value Theorem?

What is the Interval bisection algorithm?

If f(a)f(b) < 0 what does this tell you about f?

Assuming f is continuous, it changes sign at least once in [a, b], so by the IVT there must be a point x* ∈ [a, b] where f(x*) = 0.
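The sign-change test above is what interval bisection repeats at every step. A minimal sketch, assuming f is continuous on [a, b]; the function name `bisect` and the parameters `tol` and `max_iter` are illustrative choices, not taken from the cards:

```python
def bisect(f, a, b, tol=1e-10, max_iter=200):
    """Interval bisection sketch: assumes f is continuous and f(a) * f(b) < 0."""
    fa, fb = f(a), f(b)
    if fa * fb >= 0:
        # No strict sign change, so the IVT argument does not apply as stated.
        raise ValueError("f(a) and f(b) must have opposite signs")
    for _ in range(max_iter):
        m = 0.5 * (a + b)              # midpoint of the current interval
        fm = f(m)
        if fm == 0 or 0.5 * (b - a) <= tol:
            return m                   # root lies within tol of m
        if fa * fm < 0:
            b, fb = m, fm              # sign change is in [a, m]
        else:
            a, fa = m, fm              # sign change is in [m, b]
    return 0.5 * (a + b)


# Example: the positive root of x^2 - 2 lies in [1, 2].
root = bisect(lambda x: x * x - 2, 1.0, 2.0)
```

Each pass keeps the half of the interval where the sign change survives, so the root stays bracketed throughout.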

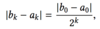

What length does the interval have after k iterations of interval bisection?

What is the error of the midpoint of an interval after k iterations of interval bisection?

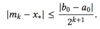

How many iterations do we need to make the error of the midpoint |m_k - x*| ≤ δ?
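A hedged sketch of how the count can be worked out. It assumes the convention that after k halvings the interval has length (b - a)/2^k and that m_k is its midpoint, so |m_k - x*| ≤ (b - a)/2^(k+1); the cards' own indexing may differ by one. The name `iterations_needed` is hypothetical.

```python
def iterations_needed(a, b, delta):
    """Smallest k with (b - a) / 2**(k + 1) <= delta, under the assumed convention."""
    k = 0
    half_width = (b - a) / 2       # error bound for the midpoint of [a, b]
    while half_width > delta:
        half_width /= 2            # one more bisection halves the bound
        k += 1
    return k


k = iterations_needed(0.0, 1.0, 1e-3)
```

Under this convention the loop agrees with the closed form k ≥ log2((b - a)/δ) - 1, rounded up to a non-negative integer.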

What is the general method for fixed point iteration?

Transform f(x) = 0 into the form g(x) = x, so that a root x* of f is a fixed point of g, meaning g(x*) = x*. To find x*, we start from some initial guess x_0 and iterate x_{k+1} = g(x_k) until |x_{k+1} - x_k| is sufficiently small.
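The iteration can be sketched as follows. The example equation x - cos(x) = 0, rearranged to g(x) = cos(x), is an illustrative choice and not from the cards; `fixed_point`, `tol` and `max_iter` are hypothetical names.

```python
import math


def fixed_point(g, x0, tol=1e-12, max_iter=1000):
    """Iterate x_{k+1} = g(x_k) until successive iterates differ by at most tol."""
    x = x0
    for _ in range(max_iter):
        x_next = g(x)
        if abs(x_next - x) <= tol:
            return x_next
        x = x_next
    raise RuntimeError("fixed point iteration did not converge")


# f(x) = x - cos(x) = 0 rearranged as g(x) = cos(x) = x.
x_fp = fixed_point(math.cos, 1.0)
```

The stopping rule uses |x_{k+1} - x_k|, as in the card, because the true error |x* - x_k| is not available while iterating.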

What is a problem with fixed point iteration?

Not all rearrangements g(x) = x will converge
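As an illustration (the equation is an assumed example, not from the cards), x^2 - x - 2 = 0 has the root x* = 2 and can be rearranged in at least two ways. One rearrangement moves away from the root, the other settles on it:

```python
import math


def iterate(g, x0, steps):
    """Apply x_{k+1} = g(x_k) a fixed number of times and return the last iterate."""
    x = x0
    for _ in range(steps):
        x = g(x)
    return x


g_bad = lambda x: x * x - 2          # x = x^2 - 2, slope 4 at x* = 2
g_good = lambda x: math.sqrt(x + 2)  # x = sqrt(x + 2), slope 1/4 at x* = 2

x0 = 2.1
bad = iterate(g_bad, x0, 5)     # drifts away from 2
good = iterate(g_good, x0, 30)  # approaches 2
```

Both forms have the same fixed point, but the slope of g near x* differs, which is what the contraction condition on the following cards captures.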

A contraction map f is a map L → L (for some closed interval L) satisfying what?

|f(x) - f(y)| ≤ λ|x - y| for some λ < 1 and for all x, y ∈ L.

What is the Contraction Mapping Theorem?

Prove the following theorem.

How do you show g is a contraction map?

What is the Local Convergence theorem?

Prove the following theorem.

What do we compare to measure the speed of convergence?

The error |x* - x_{k+1}| to the error at the previous step |x* - x_k|
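A small numerical sketch of this comparison, assuming the illustrative iteration g(x) = cos(x) (not from the cards). Since x* is not known in closed form here, a long run of the same iteration stands in for it:

```python
import math


def g(x):
    return math.cos(x)


# Reference value for x*: iterate long enough that it is accurate to rounding error.
x_star = 1.0
for _ in range(200):
    x_star = g(x_star)

# Ratios of successive errors |x* - x_{k+1}| / |x* - x_k|.
x = 1.0
ratios = []
for _ in range(20):
    x_next = g(x)
    ratios.append(abs(x_star - x_next) / abs(x_star - x))
    x = x_next
```

For this example the ratios stay below 1 and settle near |g'(x*)| = sin(x*), roughly 0.67, which is the behaviour expected of linear convergence.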

Define linear convergence.

What is λ called?

The rate or ratio

What λ shows superlinear convergence?

0

What is meant by superlinear convergence?

The error decreases at a faster and faster rate

What is the theorem about linear and superlinear convergence?