General Math Deck Flashcards

(55 cards)

What are the three ways of multiplying vectors, and what are their respective outcomes?

- Multiplying a vector by a scalar (also known as scaling)

- Multiplying two vectors to obtain a scalar (dot product)

- Multiplying two vectors to obtain a new vector (vector product)

What is the expression for the dot product?

It is defined as the product of the magnitudes of the two vectors multiplied by the cosine of the angle 𝜃 between them: A · B = |A||B| cos 𝜃.
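As a quick check of this definition, the minimal Python sketch below compares the component-wise sum with the magnitude-and-cosine form. The function names and the two example vectors are illustrative assumptions, not part of the deck.

```python
import math

def dot(a, b):
    """Dot product as the sum of component-wise products."""
    return sum(x * y for x, y in zip(a, b))

def magnitude(a):
    """Euclidean length of a vector."""
    return math.sqrt(dot(a, a))

# Example vectors (chosen for illustration): A lies along x, B is at 45 degrees to it.
A = (2.0, 0.0)
B = (3.0, 3.0)

# Angle between A and B, measured from each vector's direction in the plane.
theta = math.atan2(B[1], B[0]) - math.atan2(A[1], A[0])

via_components = dot(A, B)
via_angle = magnitude(A) * magnitude(B) * math.cos(theta)

# The two values agree up to floating-point rounding.
print(via_components, via_angle)
```

Both routes give the same scalar, which is why either form can be used depending on whether components or the angle are known.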

What is the vector product/cross product?

The magnitude of the vector product of two vectors is defined as the product of their magnitudes multiplied by the sine of the angle 𝜃 between them: |A × B| = |A||B| sin 𝜃.

The vector product is a vector that acts perpendicular to both vectors A and B.

If you curl the fingers of your right hand from the first vector A towards the second vector B, your thumb points in the direction of the cross product.
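The properties above can be checked with a small Python sketch. The component formula in `cross` is the standard one for 3D vectors; the function names and example vectors are assumptions made for illustration.

```python
def cross(a, b):
    """Cross product of two 3D vectors, by components."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def dot(a, b):
    """Dot product, used here only to test perpendicularity."""
    return sum(x * y for x, y in zip(a, b))

A = (1.0, 0.0, 0.0)   # along x
B = (0.0, 2.0, 0.0)   # along y, so the angle between A and B is 90 degrees
C = cross(A, B)

print(C)                     # (0.0, 0.0, 2.0): along +z, as the right-hand rule predicts
print(dot(C, A), dot(C, B))  # 0.0 0.0: C is perpendicular to both A and B
```

The magnitude of the result is 2, matching |A||B| sin 𝜃 = 1 × 2 × 1. Swapping the order to `cross(B, A)` reverses the direction.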

Before you add matrices, what do both matrices need to have?

They need to have the same dimensions.

What conformability condition must two matrices satisfy before they can be multiplied?

If they conform, what are the dimensions of the product matrix?

The number of columns of the ‘lead’ (1st) matrix must equal the number of rows of the ‘lag’ (2nd) matrix.

The product has as many rows as the ‘lead’ matrix and as many columns as the ‘lag’ matrix.

If two matrices conform for multiplication, what would the values in the product be?

Each value is the sum of the products of corresponding entries in a row of the lead matrix and a column of the lag matrix.
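A minimal Python sketch of this rule, including the conformability check, might look as follows. The name `matmul` and the example matrices are assumptions for illustration; matrices are represented as lists of rows.

```python
def matmul(lead, lag):
    """Multiply two matrices given as lists of rows."""
    n_rows, n_inner, n_cols = len(lead), len(lag), len(lag[0])
    # Conformability: columns of the lead matrix must equal rows of the lag matrix.
    if any(len(row) != n_inner for row in lead):
        raise ValueError("matrices do not conform for multiplication")
    # Entry (i, j) is the sum of products along row i of lead and column j of lag.
    return [
        [sum(lead[i][k] * lag[k][j] for k in range(n_inner)) for j in range(n_cols)]
        for i in range(n_rows)
    ]

A = [[1, 2, 3],
     [4, 5, 6]]      # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 x 2

print(matmul(A, B))  # [[58, 64], [139, 154]], a 2 x 2 result
```

The result has the rows of the lead matrix (2) and the columns of the lag matrix (2).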

Is matrix multiplication commutative and associative?

It is not commutative, but it is associative and distributive over addition.
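A small numerical check of these two properties, as a sketch with arbitrarily chosen 2x2 matrices (the helper `matmul` and the matrices are illustrative assumptions):

```python
def matmul(lead, lag):
    """Multiply two conformable matrices given as lists of rows."""
    return [
        [sum(a * b for a, b in zip(row, col)) for col in zip(*lag)]
        for row in lead
    ]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
C = [[1, 1], [0, 1]]

print(matmul(A, B))  # [[2, 1], [4, 3]]
print(matmul(B, A))  # [[3, 4], [1, 2]], so AB != BA
print(matmul(matmul(A, B), C) == matmul(A, matmul(B, C)))  # True: (AB)C == A(BC)
```

One example cannot prove associativity in general, but a single counterexample like this is enough to show that multiplication is not commutative.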

Determinants are only defined for…

square matrices.

How is the determinant of a 2x2 matrix determined?

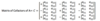

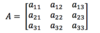

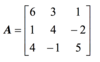

How would you determine the determinant of this 3x3 matrix?

Using the most intuitive method.

First append copies of the first two columns of the matrix as new columns 4 and 5 on the right.

The terms you add are the products along the three diagonals running down to the right, starting from a11, a12 and a13.

The terms you subtract are the products along the three diagonals running down to the left, starting from a13 and from the copied a11 and a12 in columns 4 and 5.

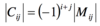
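The diagonal method can be sketched in Python as below. The function name `det3_sarrus` and the example matrix are assumptions for illustration; the method applies only to 3x3 matrices.

```python
def det3_sarrus(m):
    """Determinant of a 3x3 matrix (list of rows) by the diagonal rule."""
    # Append copies of columns 1 and 2 as columns 4 and 5.
    ext = [row + row[:2] for row in m]
    # Diagonals running down to the right, starting at a11, a12, a13.
    added = sum(ext[0][c] * ext[1][c + 1] * ext[2][c + 2] for c in range(3))
    # Diagonals running down to the left, starting at columns 3, 4, 5 of the top row.
    subtracted = sum(ext[0][c + 2] * ext[1][c + 1] * ext[2][c] for c in range(3))
    return added - subtracted

M = [[1, 2, 3],
     [0, 1, 4],
     [5, 6, 0]]

print(det3_sarrus(M))  # 1  (added terms sum to 40, subtracted terms to 39)
```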

What is the cofactor and how is it defined?

Mij is the minor: the determinant of the submatrix left after deleting row i and column j.

The factor -1 raised to the power i + j gives the cofactor the same sign as the minor if the sum of i and j is even, and the opposite sign if the sum is odd.

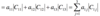

How can the third-order determinant be expressed through the Laplace expansion?

Take the elements a11 to a13 of the first row.

Determine the cofactor of each element from its minor, multiply each element by its cofactor, and add the three products.
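A recursive Python sketch of the expansion along the first row is shown below. The helper names `submatrix` and `det` are assumptions, and indices are 0-based in the code, so the first row is row 0.

```python
def submatrix(m, i, j):
    """Matrix m with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]

def det(m):
    """Determinant by Laplace expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    # For the first row i = 0, so the cofactor sign (-1)^(i+j) reduces to (-1)^j.
    return sum((-1) ** j * m[0][j] * det(submatrix(m, 0, j)) for j in range(len(m)))

M = [[1, 2, 3],
     [0, 1, 4],
     [5, 6, 0]]

print(det(M))  # 1, from 1*(-24) - 2*(-20) + 3*(-5)
```

The same function works for any square matrix, although the cost grows very quickly with size.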

What does interchanging any two rows (or columns) do to the value of the determinant?

It will change the sign but not the magnitude.

What does multiplying any row (or column) by a scalar do to the determinant?

It multiplies the determinant by that scalar: scaling one row (or column) by k makes the determinant k times as large.

If one row (or column) is a multiple of another row (or column), what is the value of the determinant?

zero
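The three determinant properties above can be checked on a 2x2 example. This is a sketch with an arbitrarily chosen matrix; `det2` uses the standard formula ad - bc.

```python
def det2(m):
    """Determinant of a 2x2 matrix [[a, b], [c, d]] as a*d - b*c."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

M = [[3, 1],
     [2, 5]]

swapped = [M[1], M[0]]                      # interchange the two rows
scaled = [[4 * x for x in M[0]], M[1]]      # multiply the first row by k = 4
dependent = [M[0], [2 * x for x in M[0]]]   # second row is a multiple of the first

print(det2(M))          # 13
print(det2(swapped))    # -13: sign changes, magnitude does not
print(det2(scaled))     # 52: 4 times the original
print(det2(dependent))  # 0
```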

Under what conditions can an inverse matrix be defined for a matrix?

It can be defined only for a square matrix, and only if that matrix is non-singular (its determinant is non-zero).

What does a matrix require to be non-singular?

Its rows (or columns) must be linearly independent.

What is the rank of a matrix?

The rank is defined as the maximum number of linearly independent rows or columns in the matrix.

An n×n non-singular matrix is of rank n.

For an m×n matrix, the rank can be at most m or n, whichever is smaller.

Another way of determining the rank is to find the order of the largest non-vanishing determinant that can be constructed from the rows and columns of the matrix.
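One way to compute the rank in practice is sketched below. Note that it uses Gaussian elimination (counting pivot rows), which is a different technique from the determinant-based method described above, although both give the same rank. The function name `rank` and the examples are assumptions for illustration.

```python
from fractions import Fraction

def rank(m):
    """Rank of a matrix (list of rows) via Gaussian elimination with exact fractions."""
    rows = [[Fraction(x) for x in row] for row in m]
    n_rows, n_cols = len(rows), len(rows[0])
    r = 0  # number of pivot rows found so far
    for c in range(n_cols):
        # Find a row at or below position r with a non-zero entry in column c.
        pivot = next((i for i in range(r, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Eliminate column c from every row below the pivot row.
        for i in range(r + 1, n_rows):
            factor = rows[i][c] / rows[r][c]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == n_rows:
            break
    return r

print(rank([[1, 2, 3], [0, 1, 4], [5, 6, 0]]))  # 3: non-singular 3x3
print(rank([[1, 2, 3], [4, 5, 6]]))             # 2: at most min(2, 3)
print(rank([[1, 2, 3], [2, 4, 6]]))             # 1: second row is twice the first
```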

Can you determine the cofactor for each element in a matrix?

Yes.

What is really important to remember when multiplying matrices?

You need to multiply across a row of one matrix and down a column of the other.

One row remains fixed as you move across the columns of the other matrix.

Keep focused! It's easy to mess it up!

What is the general formula for the inverse of a matrix?

A⁻¹ = (1 / |A|) adj(A), where |A| is the determinant of the original matrix A.

The cofactor matrix needs to be transposed to obtain the adjoint adj(A).
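The steps (cofactor matrix, transpose to get the adjoint, divide by the determinant) can be sketched in Python as follows. The helper names are assumptions, and the sketch assumes integer entries so that exact fractions can be used.

```python
from fractions import Fraction

def submatrix(m, i, j):
    """Matrix m with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]

def det(m):
    """Determinant by expansion along the first row; the empty matrix has determinant 1."""
    if not m:
        return 1
    return sum((-1) ** j * m[0][j] * det(submatrix(m, 0, j)) for j in range(len(m)))

def inverse(m):
    """Inverse of a square integer matrix as adjoint divided by determinant."""
    d = det(m)
    if d == 0:
        raise ValueError("matrix is singular, so no inverse exists")
    n = len(m)
    cofactors = [[(-1) ** (i + j) * det(submatrix(m, i, j)) for j in range(n)]
                 for i in range(n)]
    # The adjoint is the transpose of the cofactor matrix.
    adjoint = [[cofactors[j][i] for j in range(n)] for i in range(n)]
    return [[Fraction(x, d) for x in row] for row in adjoint]

A = [[2, 1],
     [5, 3]]          # determinant is 1

print(inverse(A) == [[3, -1], [-5, 2]])  # True
```

Multiplying `A` by the result gives the identity matrix, which is a useful check when working by hand.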

How do you find the Cartesian equation from a vector equation?

Separate the vector equation into one Cartesian equation per component.

From the resulting equations, eliminate the parameter to obtain a single Cartesian equation.
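As a worked illustration of these two steps, consider a line in the plane. The specific point (1, 2) and direction (3, 4) are assumptions chosen for the example, with t as the parameter.

```latex
\begin{aligned}
\mathbf{r} &= \begin{pmatrix} 1 \\ 2 \end{pmatrix} + t \begin{pmatrix} 3 \\ 4 \end{pmatrix}
  && \text{vector equation} \\
x &= 1 + 3t, \qquad y = 2 + 4t
  && \text{separate the components} \\
t &= \frac{x - 1}{3} = \frac{y - 2}{4}
  && \text{solve each for } t \\
4x - 3y + 2 &= 0
  && \text{single Cartesian equation}
\end{aligned}
```

Substituting the point (1, 2) gives 4 - 6 + 2 = 0, so the starting point of the line satisfies the result.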

What is the scalar product form?

Explain why this is the case.

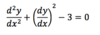

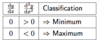

- When the 1st derivative is 0 and the 2nd derivative is positive, the gradient is increasing as we move from left to right. A gradient going from negative to positive through the critical point means the critical point is a minimum.

- When the 1st derivative is 0 and the 2nd derivative is negative, the gradient is decreasing as we move from left to right. A gradient going from positive to negative through the critical point means the critical point is a maximum.
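The test described in these two points can be sketched in Python for a concrete function. The function f(x) = x³ - 3x and the helper names are assumptions chosen for illustration; its derivatives are written out by hand.

```python
def f_prime(x):
    """First derivative of f(x) = x**3 - 3*x."""
    return 3 * x**2 - 3

def f_double_prime(x):
    """Second derivative of f(x) = x**3 - 3*x."""
    return 6 * x

def classify(x):
    """Apply the second derivative test at a critical point x."""
    if f_prime(x) != 0:
        raise ValueError("x is not a critical point")
    second = f_double_prime(x)
    if second > 0:
        return "minimum"       # gradient is increasing through zero
    if second < 0:
        return "maximum"       # gradient is decreasing through zero
    return "inconclusive"      # the test gives no answer when the 2nd derivative is 0

for x in (-1, 1):
    print(x, classify(x))      # -1 maximum, then 1 minimum
```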