Why is Brainscape so effective?

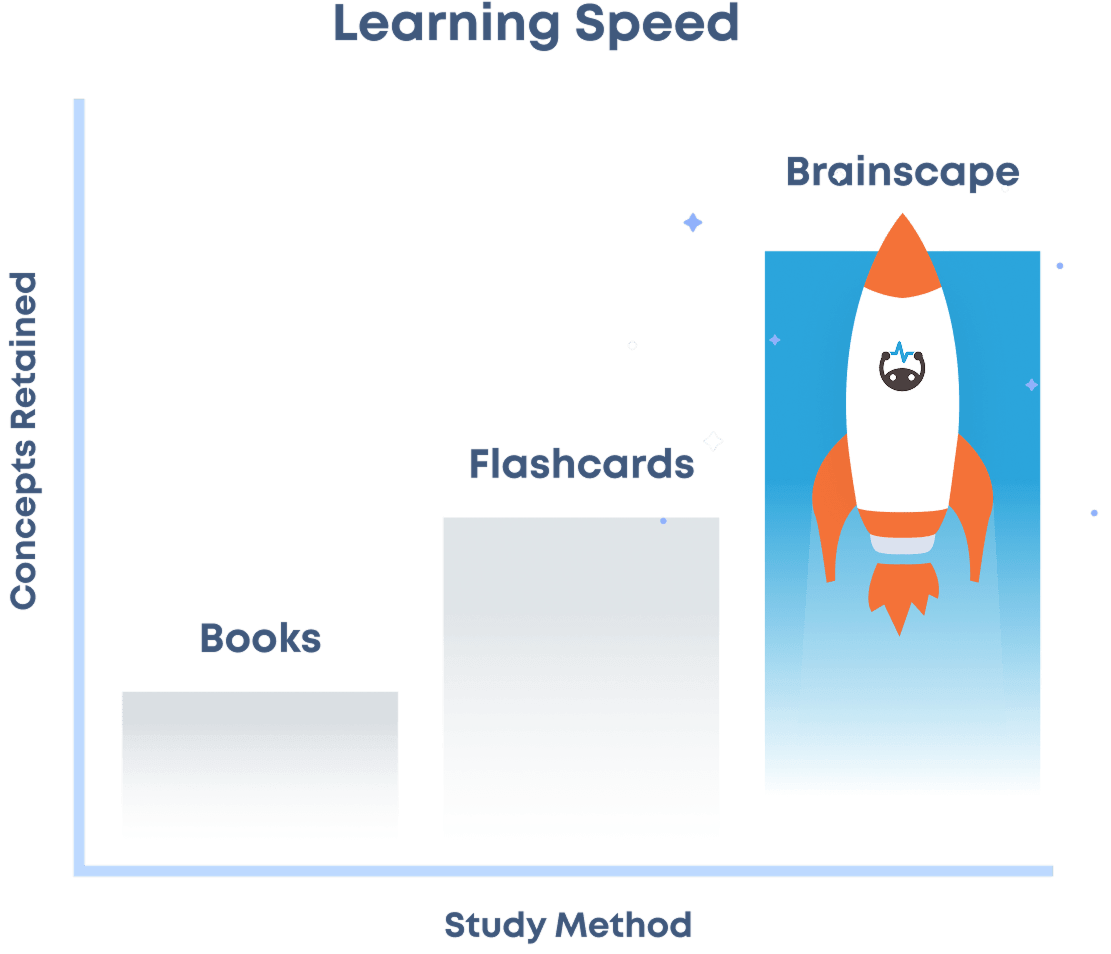

Brainscape uses proven cognitive science to help you (1) learn faster, (2) stay motivated, and (3) build stronger study habits.

11 Million+ users

30 Million+ hours saved

800+ Validating studies

Learn faster.

Activate Your Focus

Every flashcard prompts you to actively recall information from memory, the most engaging form of retrieval practice.

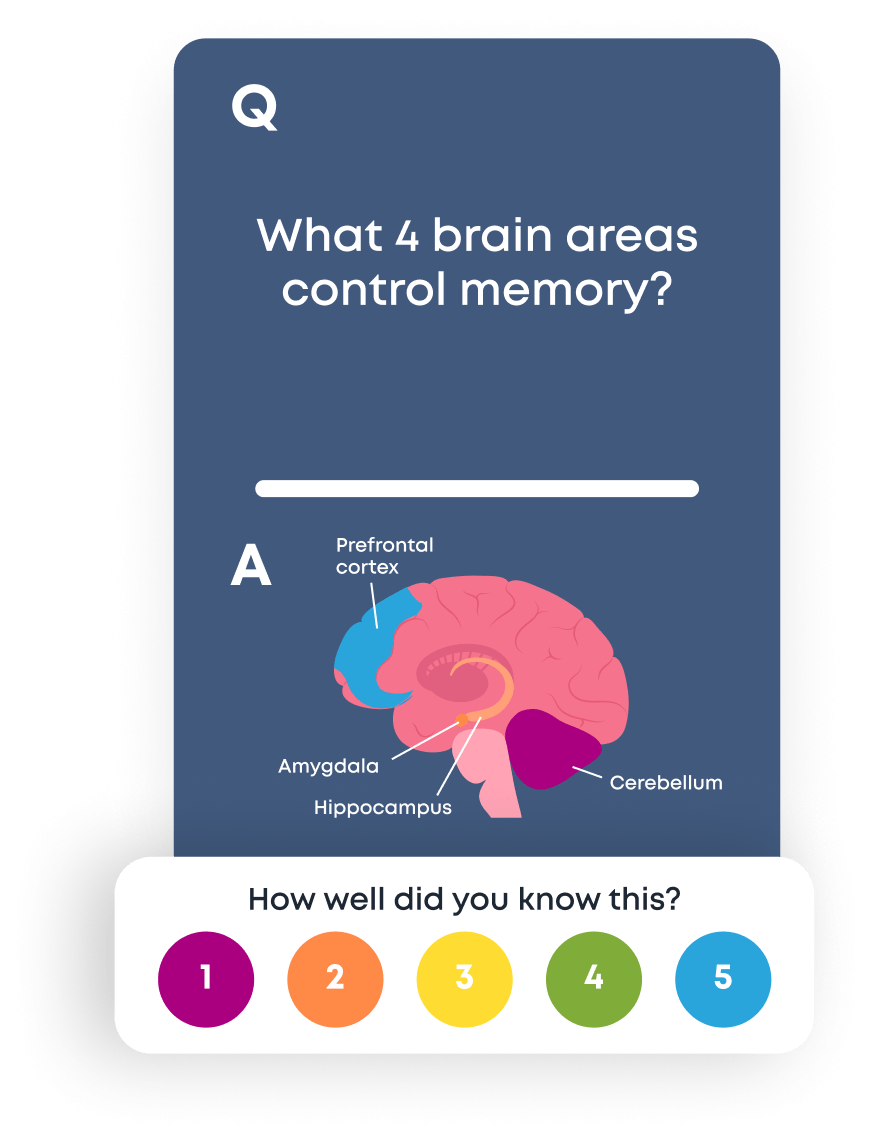

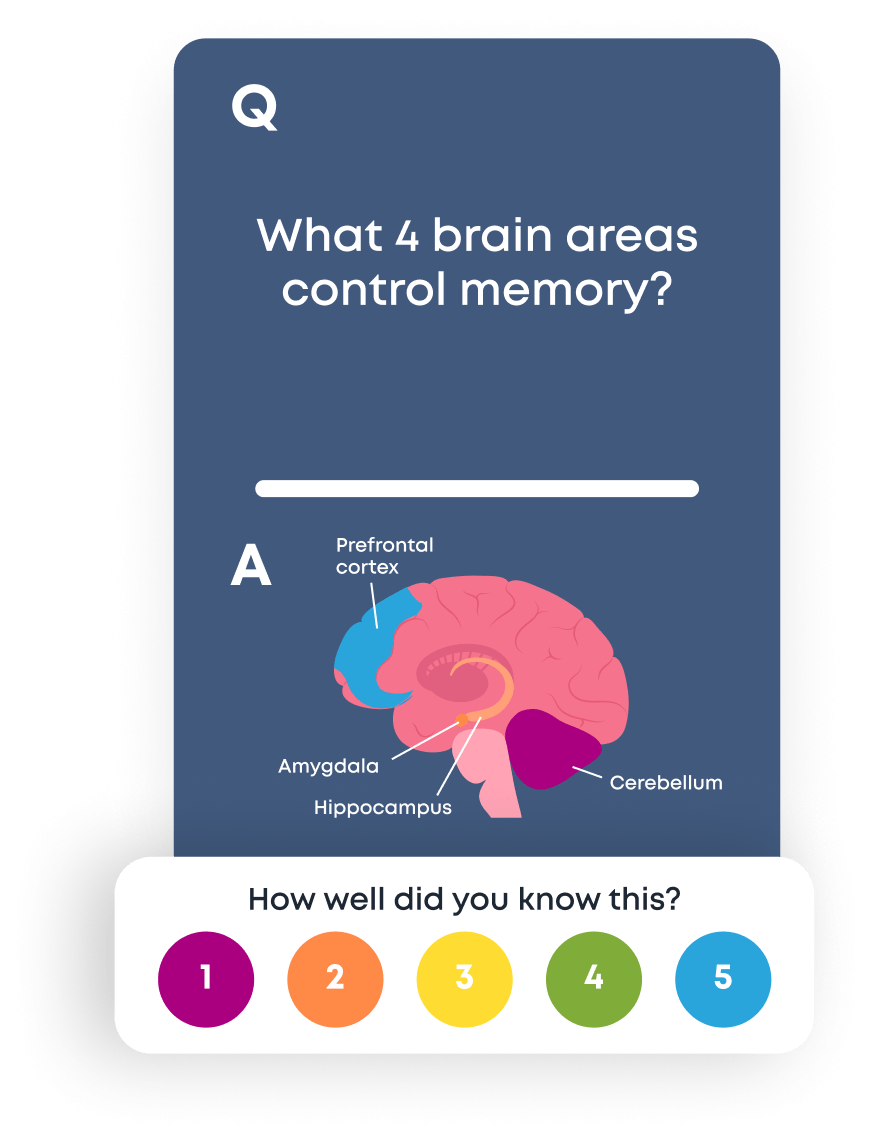

Identify Your Weaknesses

Upon revealing each answer, you rate your confidence from 1 to 5. This metacognition deepens memory, making each concept stickier.

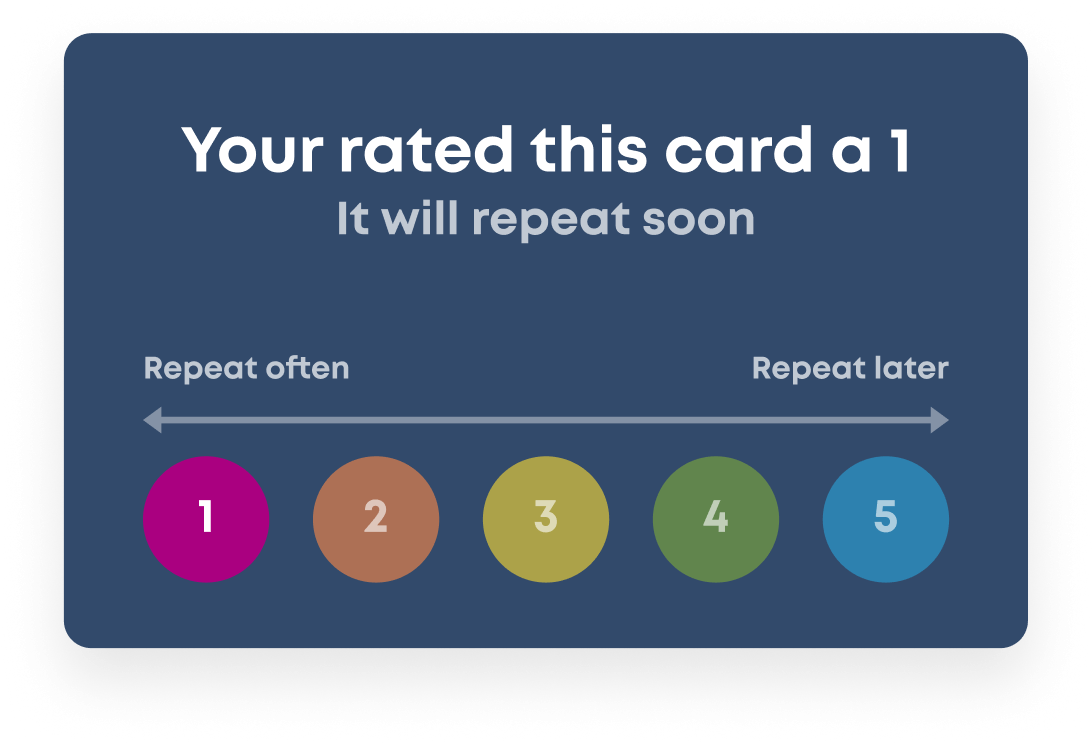

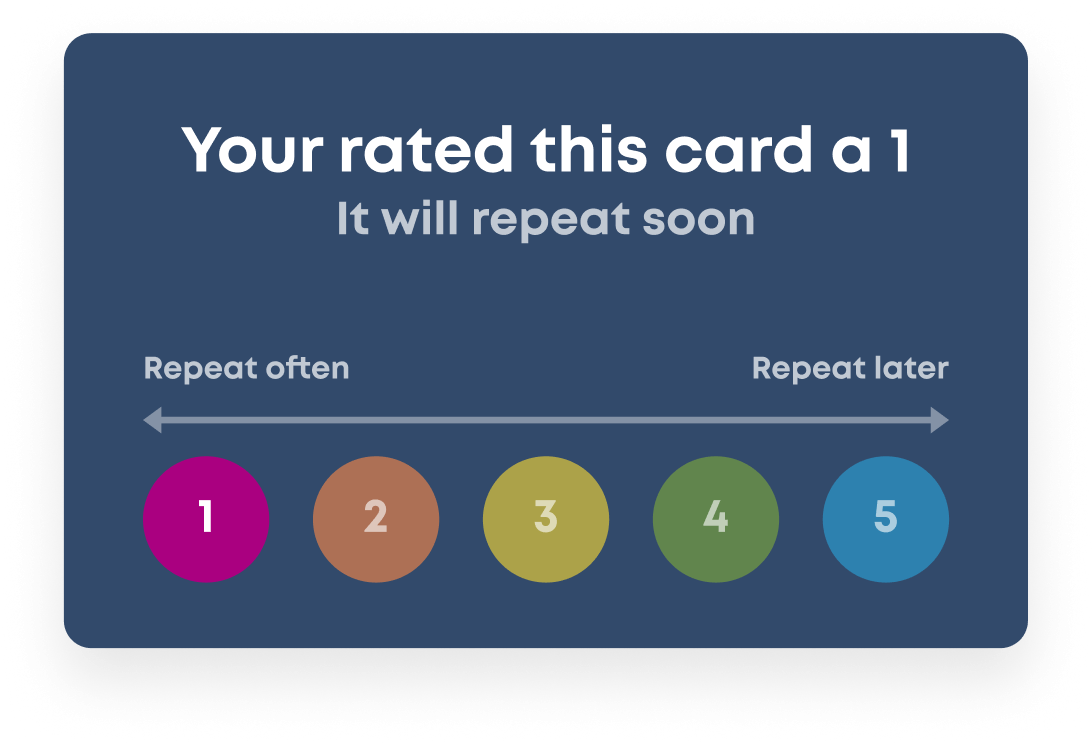

Make Knowledge Permanent

Your confidence ratings inform Brainscape’s spaced repetition system, ensuring you’ll review each flashcard right before you’d forget it.
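To make the idea concrete, here is a minimal sketch of how a 1-to-5 confidence rating could drive review timing. It is not Brainscape's actual algorithm: the function name `next_review`, the `streak` parameter, and every interval value are illustrative assumptions.

```python
from datetime import datetime, timedelta

# Hypothetical base intervals per confidence rating (1 = lowest, 5 = highest).
# These values are illustrative assumptions, not Brainscape's actual settings.
BASE_INTERVAL_MINUTES = {1: 1, 2: 10, 3: 60, 4: 24 * 60, 5: 7 * 24 * 60}


def next_review(confidence: int, last_reviewed: datetime, streak: int = 0) -> datetime:
    """Return when a card should next be shown.

    Low confidence brings the card back quickly; high confidence pushes it
    further out. `streak` (an assumed extra input) counts consecutive
    confident reviews and doubles the gap each time.
    """
    if confidence not in BASE_INTERVAL_MINUTES:
        raise ValueError("confidence must be an integer from 1 to 5")
    minutes = BASE_INTERVAL_MINUTES[confidence] * (2 ** streak)
    return last_reviewed + timedelta(minutes=minutes)


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 9, 0)
    for rating in range(1, 6):
        print(rating, next_review(rating, now))
```

The point of the sketch is the shape of the rule: cards you rate low return within minutes, while cards you rate high are spaced out over days.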

Stay motivated.

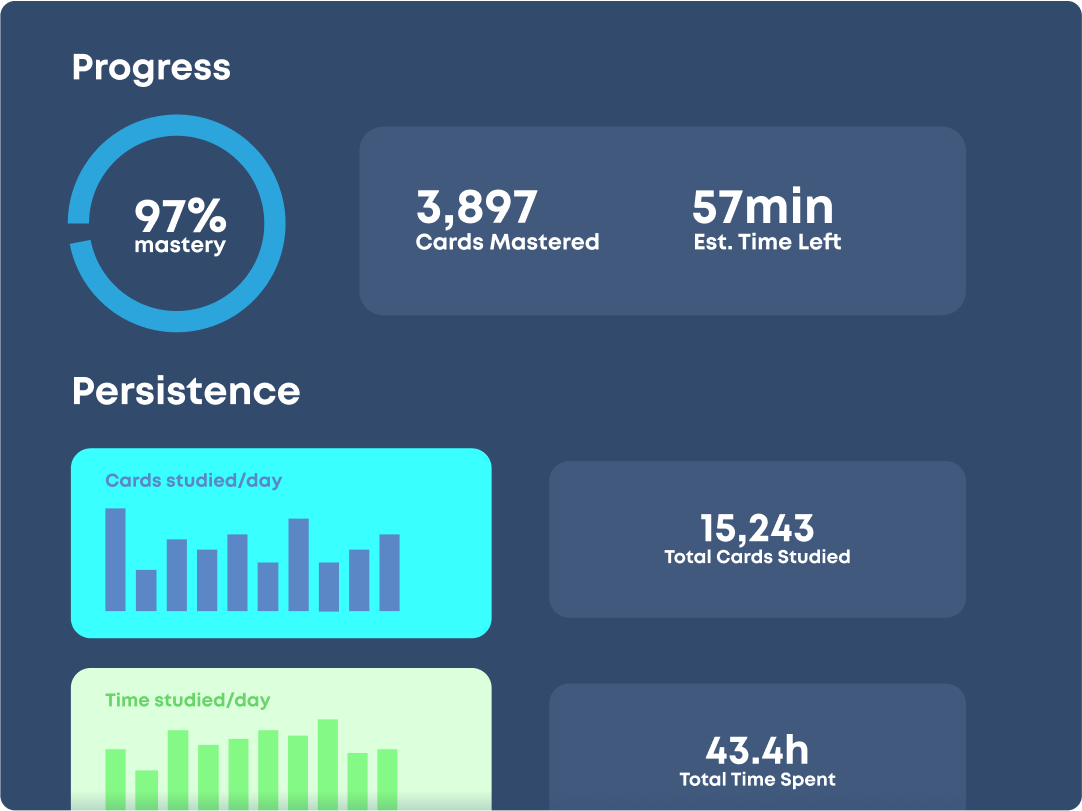

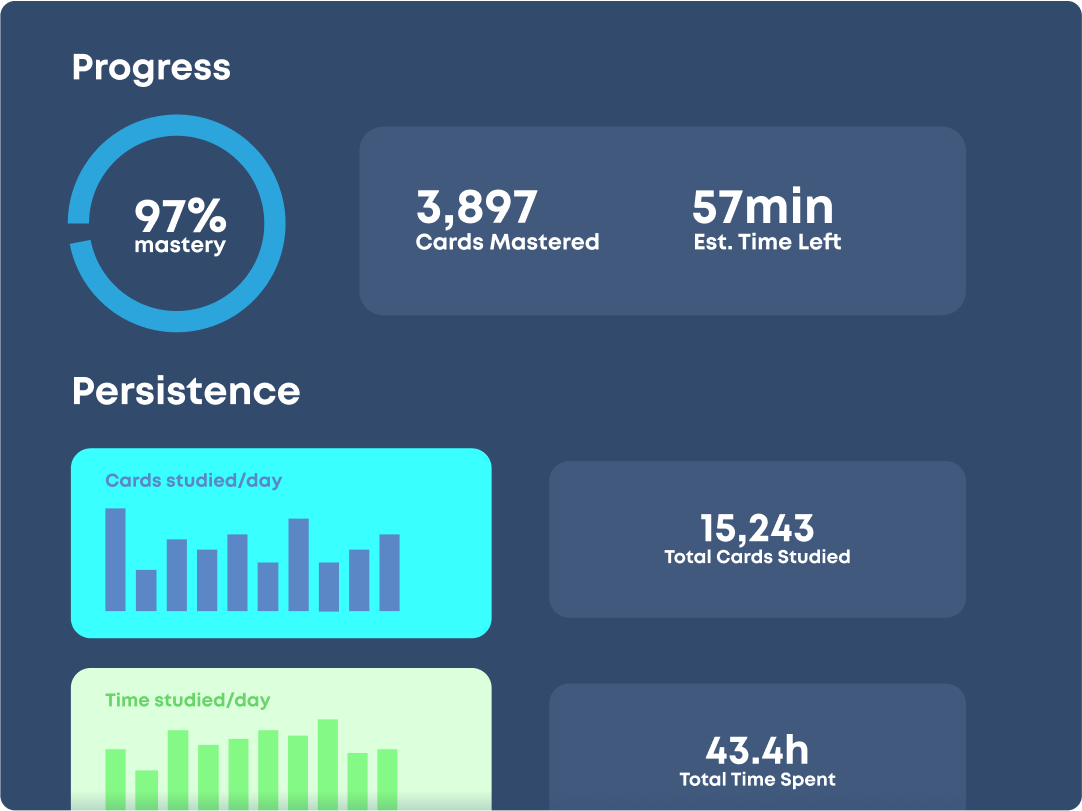

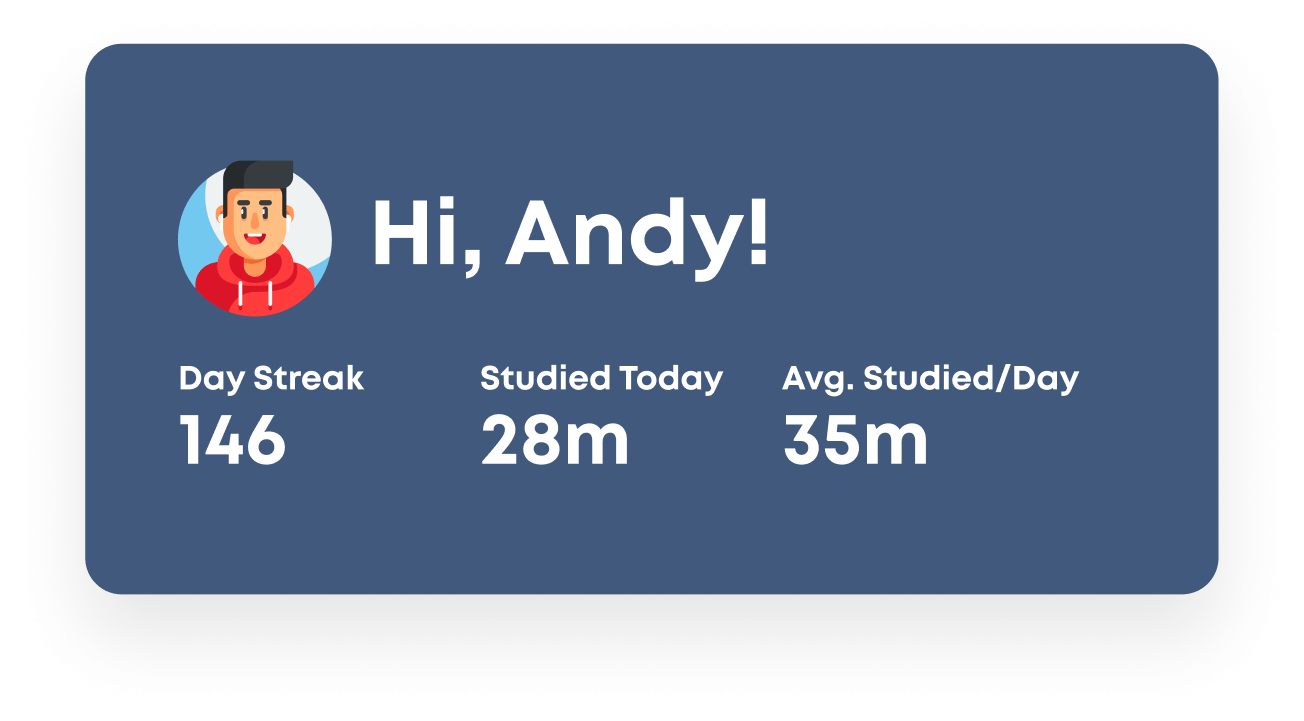

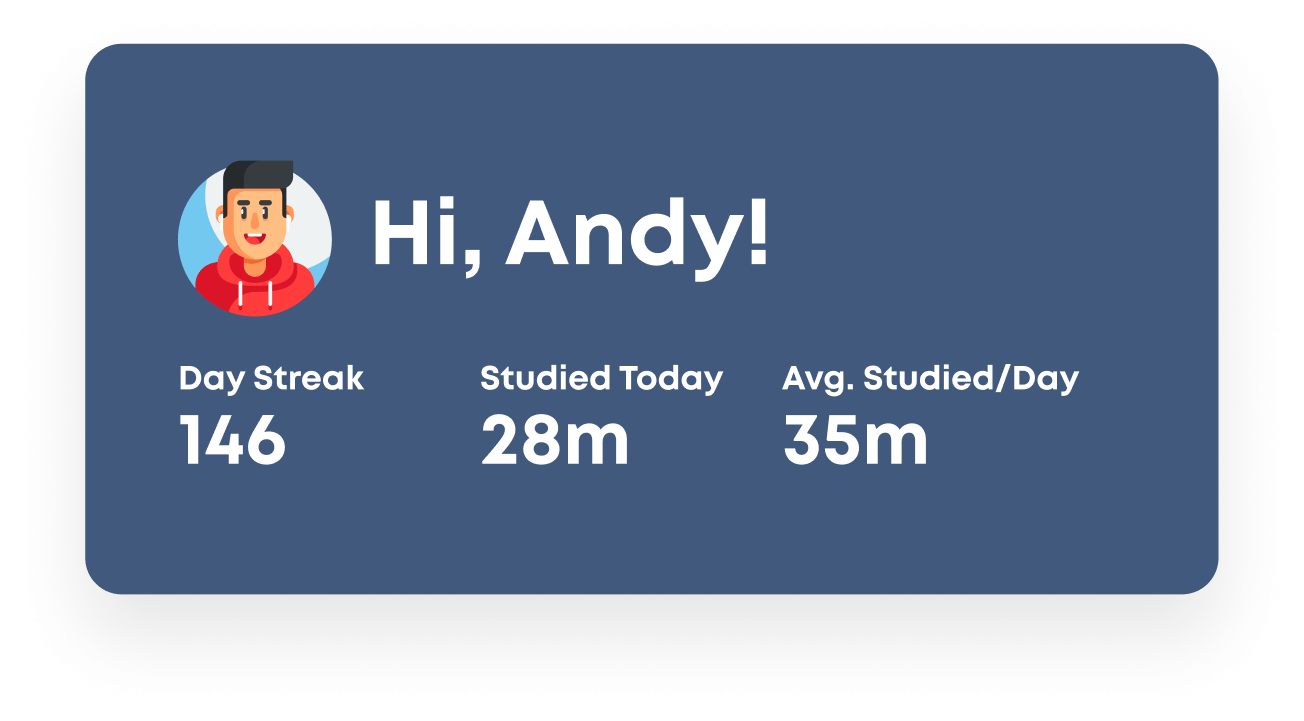

Track Your Progress

Frequent checkpoints chronicle your mastery and reward your brain with hits of dopamine.

Meet Every Deadline

The “Estimated Time Left” metric prevents procrastination and ensures that you never run out of time again.

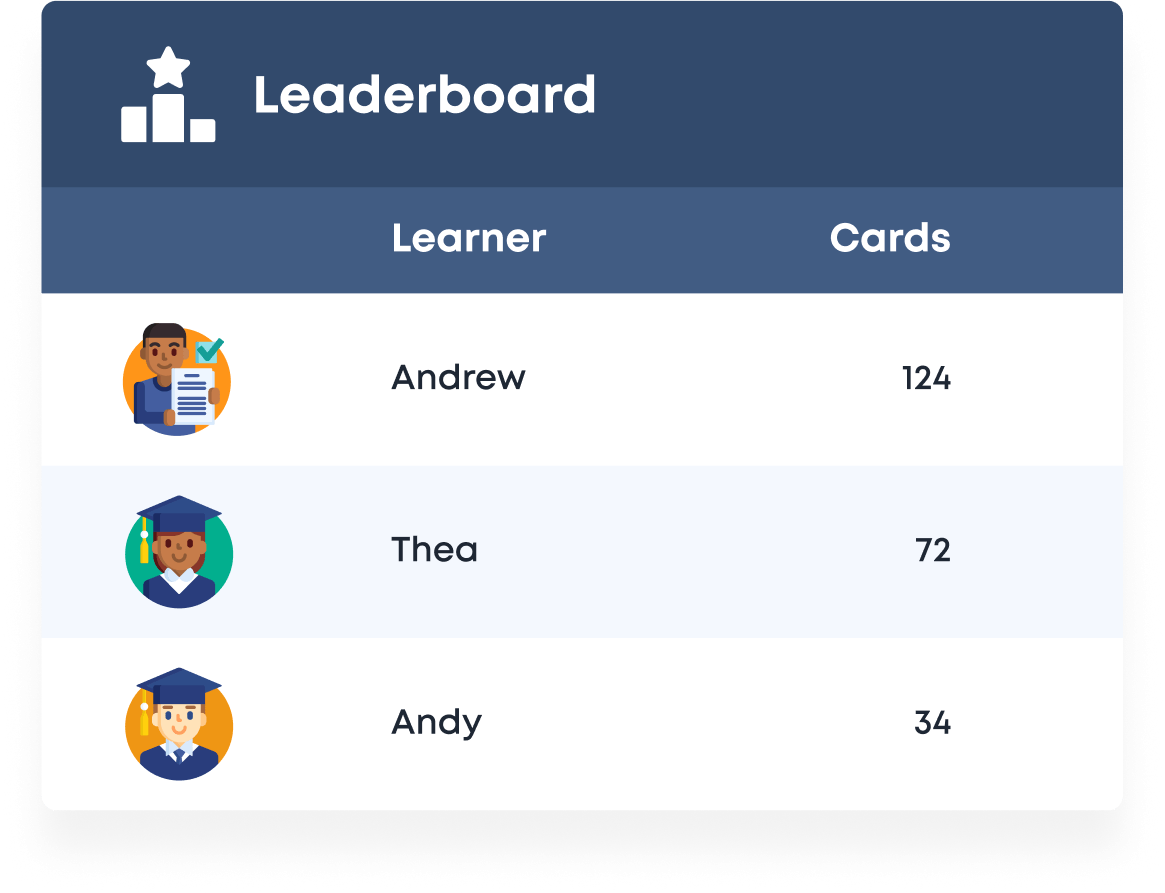

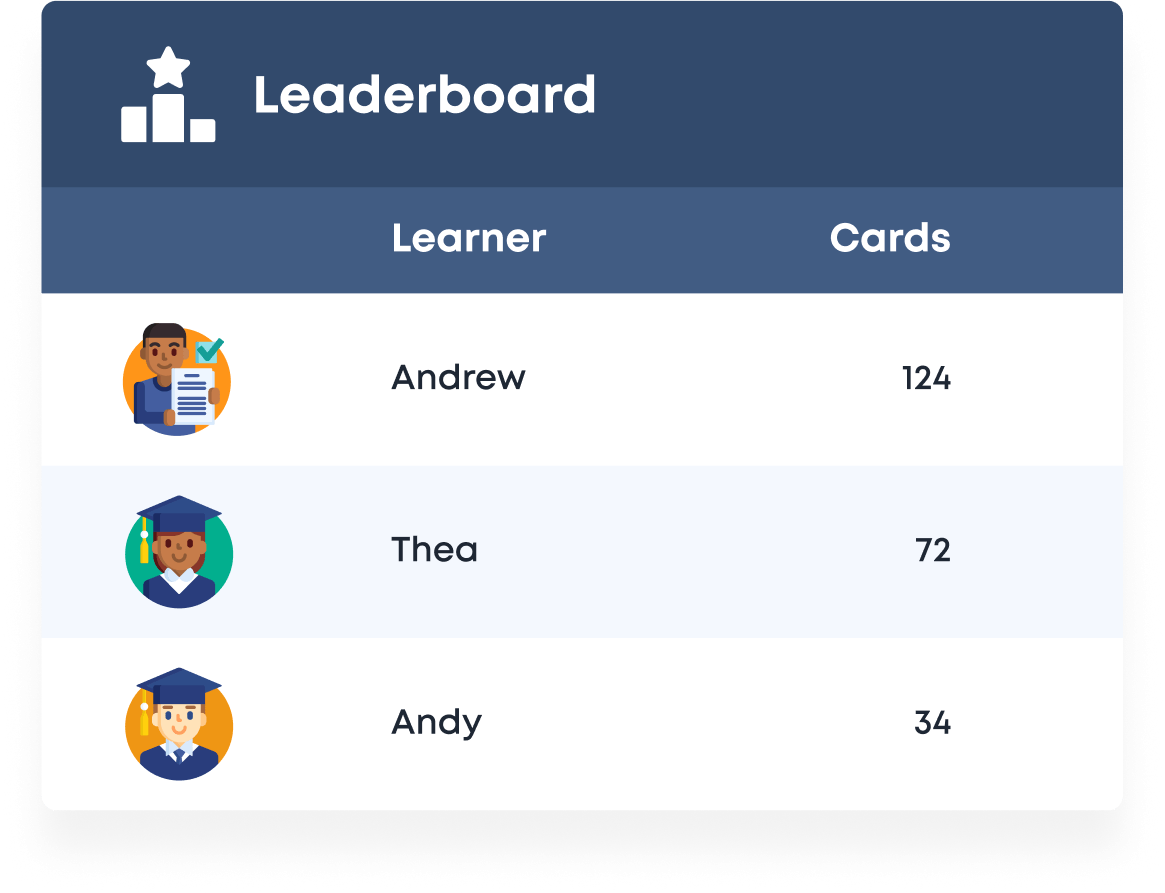

Exploit Peer Pressure

Leaderboards apply the magic of social motivation theory, pushing you to be the most consistent studier.

Build strong study habits.

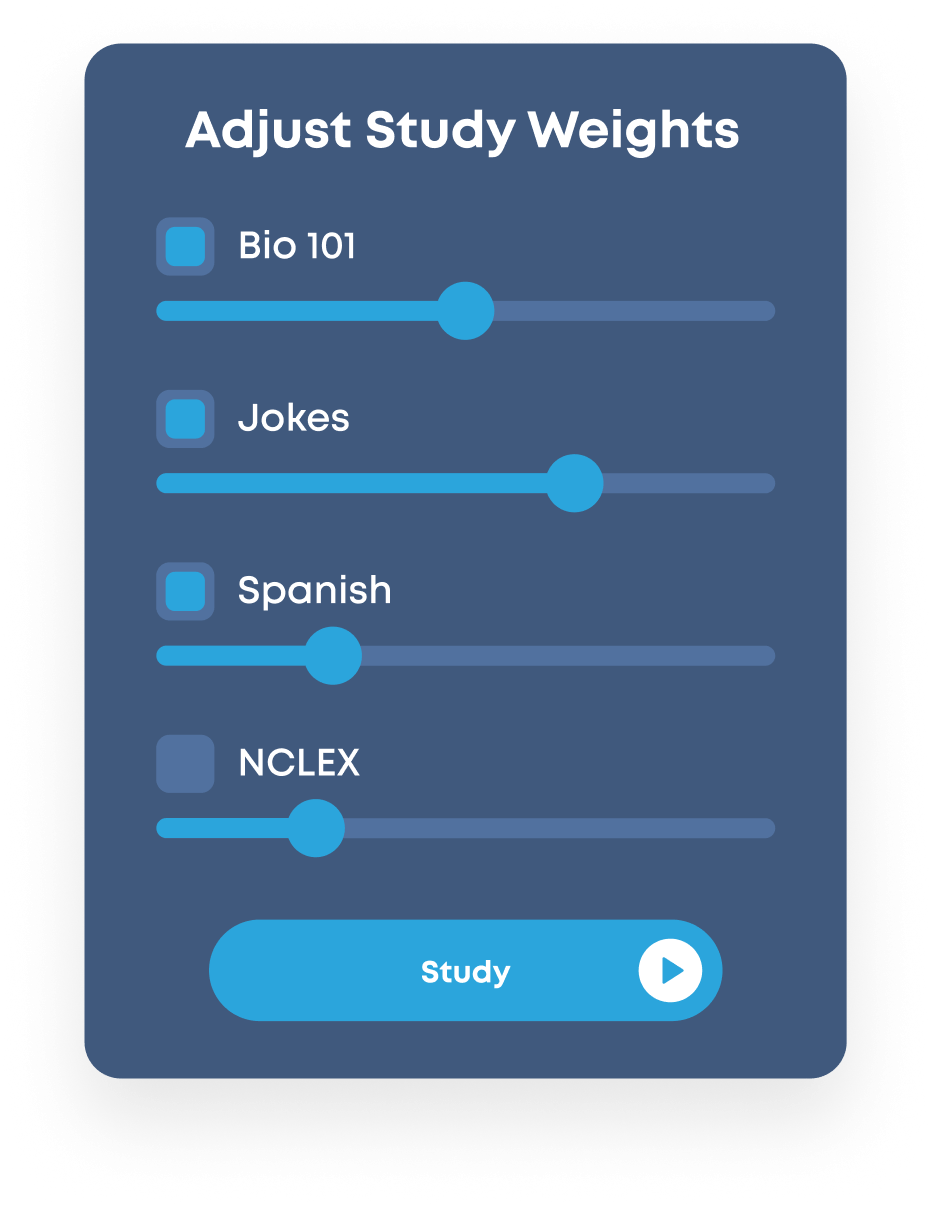

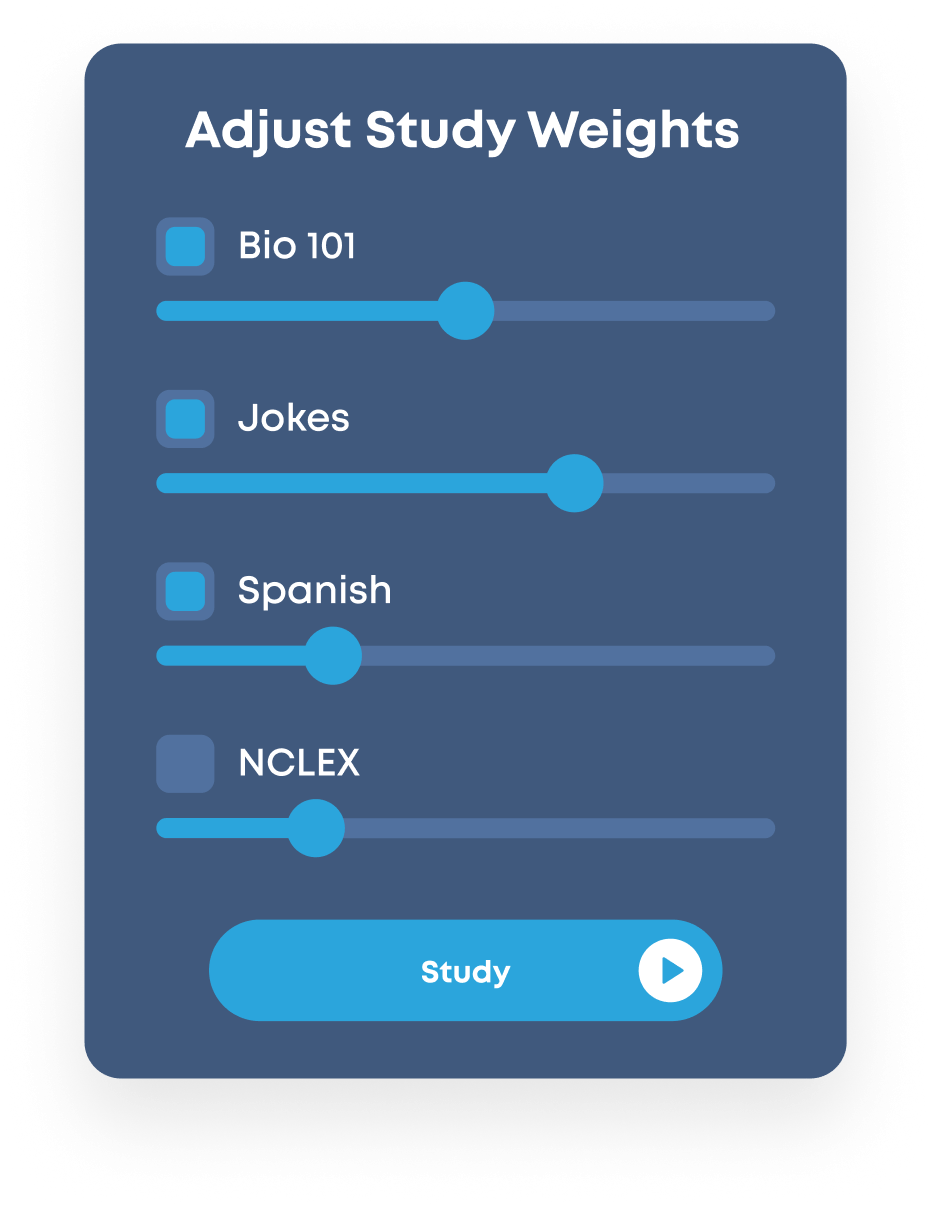

Make Studying Convenient

The “Smart Study” button delivers a curated mix of interleaved subjects with just a single tap, eliminating the pain of getting started.

Keep Your Streak Alive

Just one card studied each day is all it takes, encouraging you to be consistent and build the habit of studying.

Get Coached Into Shape

Well-timed study reminders prevent you from breaking your habit and falling victim to the dreaded "what-the-hell" effect.

Crush Any Learning Goal.

By leveraging your brain’s biological wiring, Brainscape empowers you to study as efficiently and as painlessly as humanly possible.